One Simple Product Position Change Drove 27.6% Revenue Growth for Cybersecurity Leader

Enterprise Network Protection Specialist

Last Updated: April 30, 2026 | Reviewed by Devon Cox, President, ConversionTeam

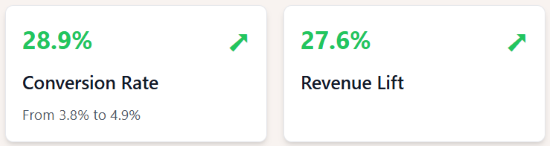

Highlights

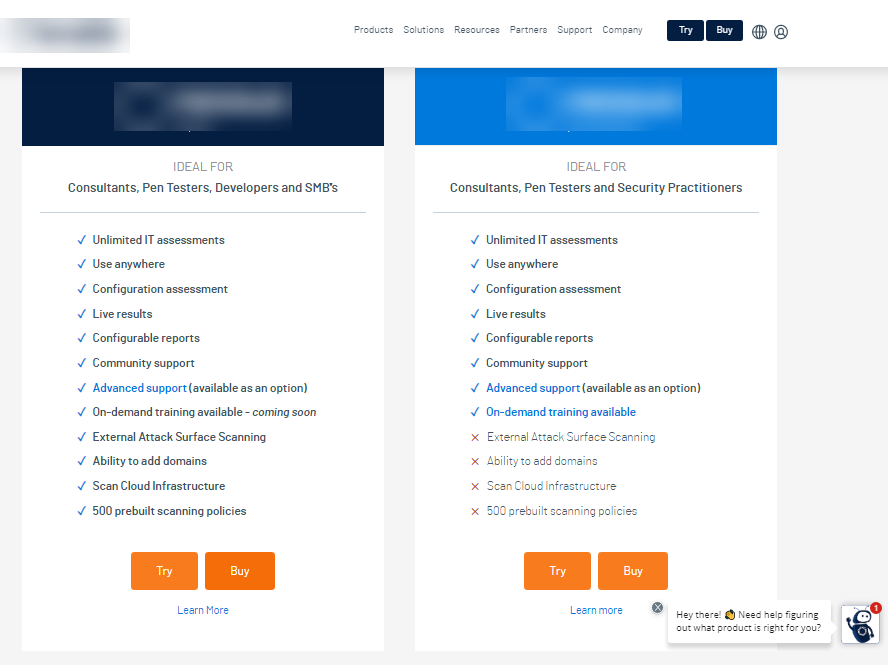

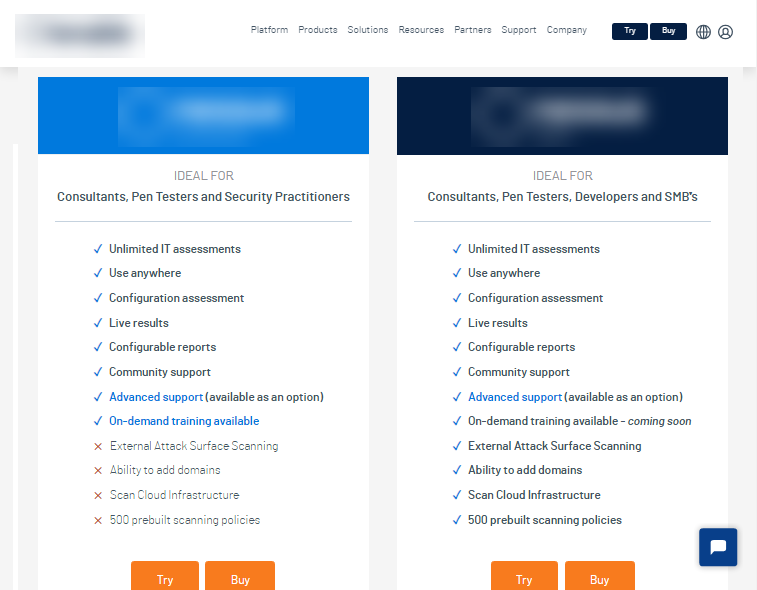

A leading enterprise cybersecurity company was seeing too few customers choose the premium "Expert" version of its vulnerability scanner software. Many customers defaulted to the less expensive "Pro" version simply because it appeared first on the page.

Pricing-tier ordering tests can move buyers toward higher tiers when the premium tier offers genuine additional value; here, a single swap produced a 27.6% revenue lift. This is the kind of pricing-page test rigor that separates specialist CRO agencies from agencies running generic landing-page playbooks.

We implemented a straightforward test that swapped the positions of the two product offerings, displaying the premium "Expert" option before the standard "Pro" option. This simple change had a dramatic effect on purchasing behavior.

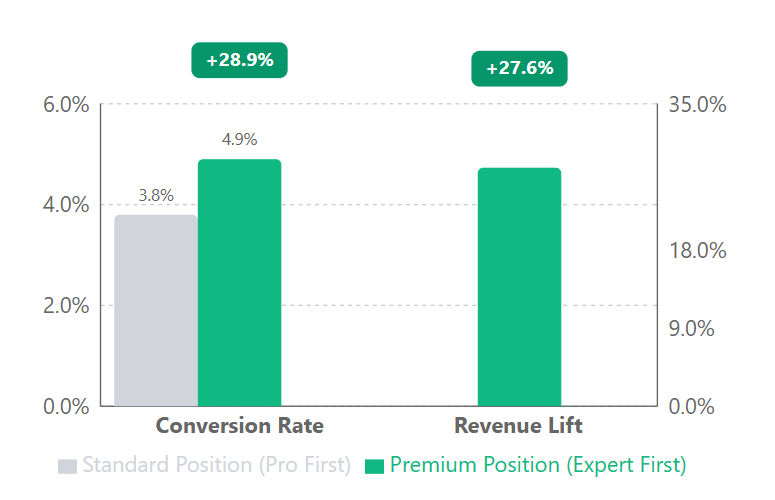

- 28.9% increase in conversion rate (from 3.8% to 4.9%)

- 27.6% uplift in revenue per user with 99% statistical confidence

- 22% conservative lift estimate used for revenue projections, translating to significant additional income

Our Strategy 💡

Our hypothesis was simple: By repositioning the premium product (Expert) before the standard product (Pro), we could increase visibility of the higher-priced option and drive more customers to select it.

The implementation was straightforward:

- Swapped the positions of the two product offerings on the page

- Maintained identical content and pricing for each product

- Ran the test across all devices with no specific user segmentation

The Results

| Metric | Control | Variation | Lift |
|---|---|---|---|
| Users | 11,166 | 10,940 | - |
| Transactions | 428 | 535 | - |
| Conversion Rate | 3.8% | 4.9% | 28.9% |
| Revenue Lift | - | - | 27.6% |

Reality Check

While these results are impressive, we made sure to advise the client that they shouldn't count on a 28.9% conversion rate lift with a 27.6% revenue increase. Test period results can sometimes show exaggerated outcomes due to sample variability and the shorter measurement window.

What's most important here is that the test version performed statistically better than the control with 99% confidence. If you wanted a more precise estimate of long-term revenue lift, you'd need to run the test longer—but this comes with trade-offs. Longer tests delay rolling out the winning version, and in this case, the opportunity cost of waiting wasn't worth it given the clear statistical significance.
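To make the point about sample variability concrete, here is a minimal sketch that puts a 99% interval around the difference in conversion rates, using the user and transaction counts from the results table. It assumes a simple Wald (normal-approximation) interval; the function name `diff_confidence_interval` is hypothetical, and the original analysis may have used a different method.

```python
from math import sqrt
from statistics import NormalDist


def diff_confidence_interval(conv_a, n_a, conv_b, n_b, confidence=0.99):
    """Wald interval for the difference in conversion rates (B minus A).

    Hypothetical helper for illustration; not the method named in the case study.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Unpooled standard error of the difference between two proportions.
    se = sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    # Two-sided critical value, about 2.576 for 99% confidence.
    z_crit = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    diff = p_b - p_a
    return diff - z_crit * se, diff + z_crit * se


# Counts from the results table: control first, then variation.
low, high = diff_confidence_interval(428, 11_166, 535, 10_940)
print(f"99% interval for the absolute lift: {low:.2%} to {high:.2%}")
```

Under these assumptions the interval sits entirely above zero, spanning roughly 0.3 to 1.8 percentage points. That is consistent with the variation being a clear winner, while showing why the exact size of the long-term lift remains uncertain.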

Why It Worked 🎯

By positioning the premium option first, we created an "anchoring effect" where users calibrated their expectations based on the higher price point, making the standard option appear as a compromise rather than a default.

Let's Talk 💬

Want to see how our analytical approach can boost your conversion rates and revenue? Get in touch for a free strategy session where we'll break down your current funnel and identify quick wins for immediate growth.

Appendix: Detailed test parameters and statistical analysis

Test Parameters

| Parameter | Value |
|---|---|
| Devices | Desktop, tablet, and mobile |
| User Segments | All visitors to product page |
| Duration Criteria | Statistical significance or minimum of two weeks |
| Tracking | Unique promo codes for each product's CTA buttons |
| Variation Elements | Product display order only |

Statistical Analysis

| Statistical Summary Metrics | Value |
|---|---|
| Confidence level | 99% |
| Z-score | 3.85 |
| P-value | 0.0000597 |
| Conservative lift estimate | 22% |
| Sample size | 22,106 users combined |
| Test duration | 25 days |
| Participation rate | Not provided |
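For readers who want to check the summary figures, the sketch below runs a pooled two-proportion z-test on the user and transaction counts from the results table. The case study does not name the test that was used, so the choice of a pooled z-test with a one-sided p-value is an assumption, and `two_proportion_z_test` is a hypothetical helper name.

```python
from math import erfc, sqrt


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, one-sided p-value) for the alternative: rate B > rate A.

    Hypothetical helper; assumes a pooled two-proportion z-test.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled conversion rate under the null hypothesis of no difference.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Upper-tail probability of the standard normal distribution.
    p_one_sided = 0.5 * erfc(z / sqrt(2))
    return z, p_one_sided


# Counts from the results table: control first, then variation.
z, p_value = two_proportion_z_test(428, 11_166, 535, 10_940)
relative_lift = (535 / 10_940) / (428 / 11_166) - 1
print(f"z = {z:.2f}, one-sided p = {p_value:.7f}, relative lift = {relative_lift:.1%}")
```

Under these assumptions the z-score comes out at about 3.85, in line with the table, and the one-sided p-value lands close to the reported figure. Note that the relative conversion lift computed from the unrounded counts is about 27.6%, while 28.9% is what you get by dividing the rounded rates (4.9% by 3.8%).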

Frequently Asked Questions

Does product ordering on a pricing page really affect which tier customers choose?

Yes, it can, and substantially. For an enterprise cybersecurity vendor, simply swapping the display order so the premium "Expert" tier appeared before the standard "Pro" tier increased conversion rate by 28.9% (from 3.8% to 4.9%) and revenue per user by 27.6%, with 99% statistical confidence. Position creates a visibility advantage that can outweigh feature differences.

How does this apply to SaaS pricing pages with multiple tiers?

The principle of "show the preferred tier first" applies broadly to 2-3 tier SaaS pricing layouts. But the result depends on what "preferred" means - premium-first works when you want to drive higher ARR; middle-tier anchoring works when you want to drive volume. Test based on your revenue goals, not assumptions about which tier customers should pick.

Is this anchoring effect or just visibility bias?

Both mechanisms contribute. Anchoring frames the premium tier as the reference point, making the Pro tier feel like the "economy" option. Visibility bias means customers often select the first option they evaluate when other factors are similar. We can't fully disentangle the two in this test, but the 27.6% revenue lift suggests the combined effect is significant.

Should B2B cybersecurity companies always lead with the premium tier?

Not universally. This result applies when the premium tier has genuine additional value and the audience can afford it. For price-sensitive segments or crowded markets where buyers comparison-shop aggressively, leading with a "popular" middle tier may convert better. Test with your specific buyer profile.

How does product positioning testing differ for B2C vs B2B SaaS?

B2C often responds to urgency and social proof cues in product ordering (best seller, most popular). B2B responds more to capability framing and decision-making ease - showing the tier most businesses need first, versus making buyers evaluate a long feature matrix. This cybersecurity test worked partly because it reduced decision friction: showing the preferred option first cut cognitive load.

Field Notes
