In a 3-month CRO testing program, ConversionTeam delivered 5 winning A/B tests out of 6 (an 83% win rate), driving $531K in best-estimate annualized revenue for an audio streaming SaaS company. The flagship email verification flow redesign alone produced a 40.85% measured lift on paid signups.

Client Background

The client is a global leader in online audio streaming, operating a SaaS platform that serves millions of listeners worldwide while enabling creators to launch and run their own radio stations. The business model is freemium, with paid subscription tiers covering advanced broadcasting features, monetization tools, and mobile apps.

The Challenge

The client's homepage and conversion funnel were oriented toward listeners rather than paying creators. Station creators, the paying customer segment, had to navigate past listener-focused content to find creator-relevant features and calls-to-action. A 27% drop-off at the email verification step was also identified as a critical funnel leak.

Objectives

Reduce creator signup friction, shift homepage messaging to speak to the paying customer segment, and validate conversion-improving concepts before committing engineering resources to native implementation. The overarching goal was to increase paid station creation through data-driven testing.

Our Solution

The engagement started with a comprehensive CRO audit that delivered 21 prioritized recommendations across the homepage, registration flow, pricing page, and technical performance. The audit methodology included user testing on both the client's site and competitors' sites, competitive analysis, GA4 funnel mapping, and a site speed and accessibility review.

Following the audit, the engagement moved into an active A/B testing program using a client-side testing tool to validate high-impact recommendations before any code changes were shipped to the production site.

A/B Testing Program Highlights

Across the engagement, we ran 6 A/B tests covering the highest-leverage parts of the conversion funnel:

- Homepage value proposition and creator-focused messaging

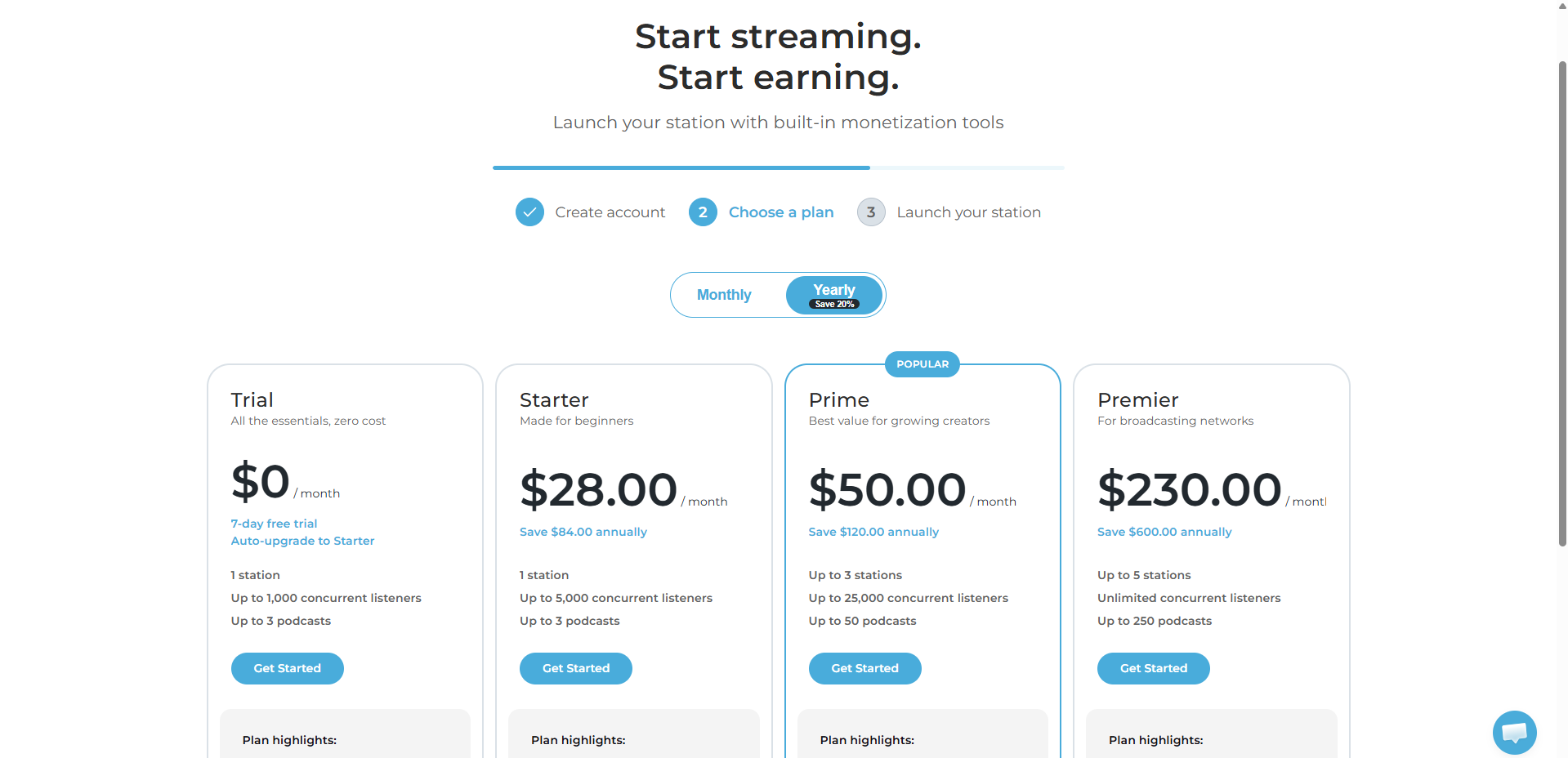

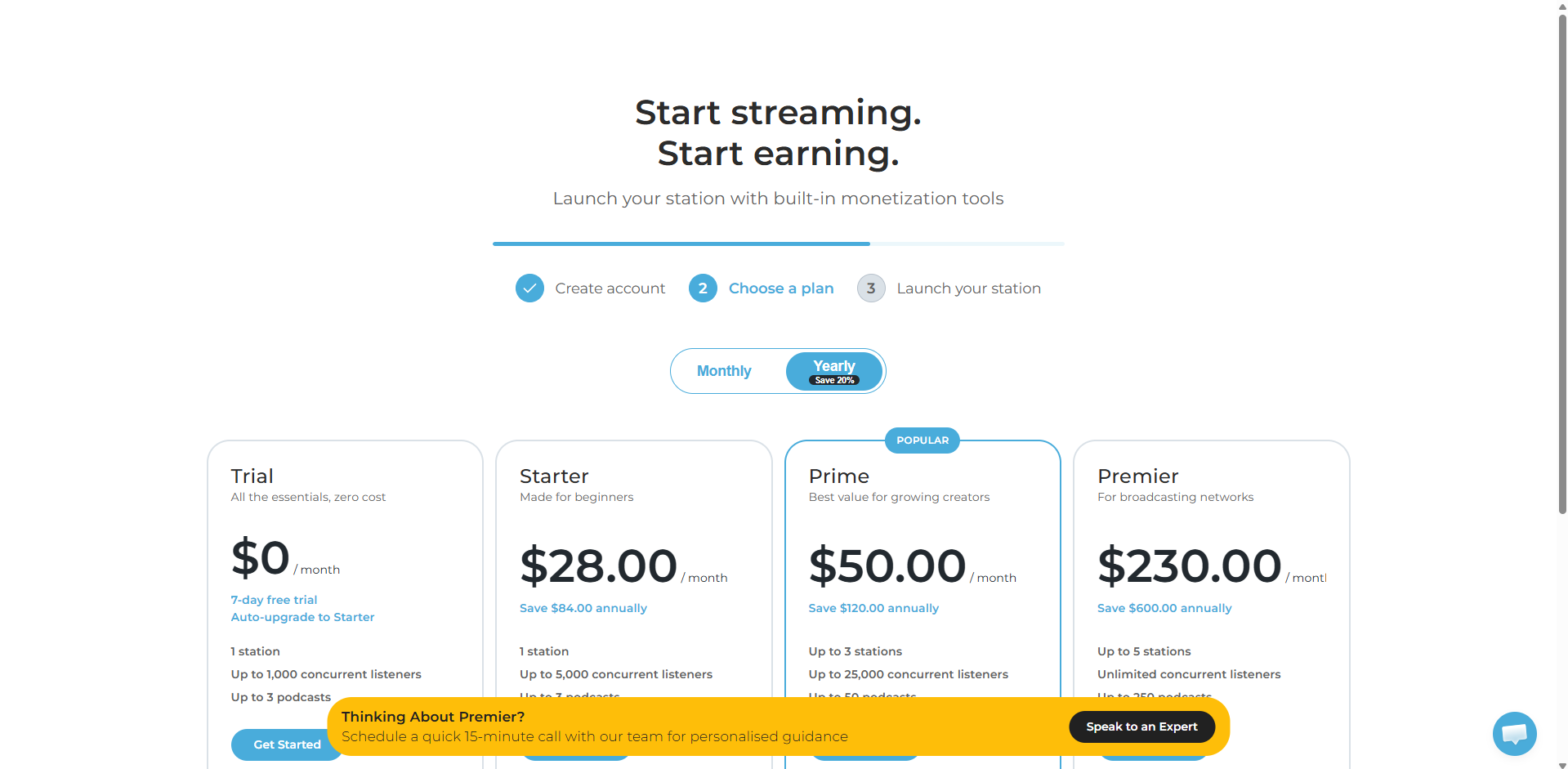

- Plans page copy and layout

- Signup and registration flow redesign

- Email verification placement

- Premium plan consultation CTA

- Trust and scarcity elements

The three featured tests below illustrate the range and depth of the program. The program's full results, including all winning and losing tests, are summarized at the end.

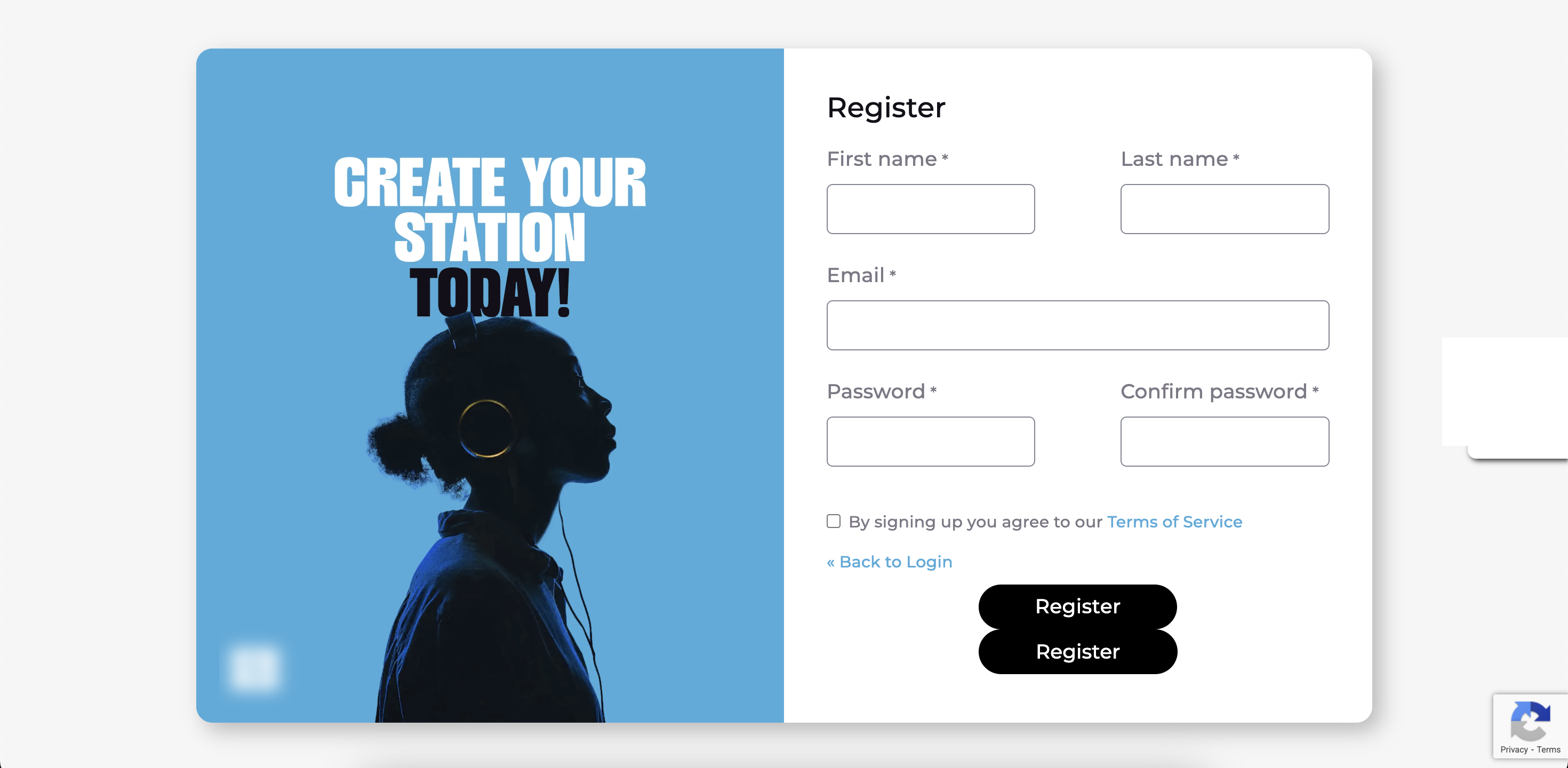

Background

Analytics showed 27% of users abandoned at the email verification step, which appeared mid-registration before users had committed to a plan. The verification step interrupted momentum at the worst possible moment.

Hypothesis

Moving email verification to later in the flow, after users select a plan, would reduce drop-off and increase paid signups by removing friction before commitment.

Methodology

The test restructured the signup flow so that users proceeded directly from account creation to plan selection, with email verification taking place after plan commitment. The variant required a custom web service to hold signup data client-side during the flow.

Results

The test was a strong winner across every funnel metric. Paid station creation rose 40.85% (measured lift), with a conservative lift of 9.08%. Account creation showed statistically significant gains, and click-through to plan selection also improved.
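Relative lift is conventionally computed as the variant's conversion rate minus the control's, divided by the control's rate. The sketch below assumes that conventional definition, because the case study does not state its exact formula or explain how the conservative figure was derived. The function name `relative_lift` and all visitor and conversion counts are hypothetical and are not data from this engagement.

```python
def relative_lift(control_conversions: int, control_visitors: int,
                  variant_conversions: int, variant_visitors: int) -> float:
    """Return the relative lift of a variant over the control.

    Assumes the conventional definition (not confirmed by the case study):
        (variant_rate - control_rate) / control_rate
    """
    if control_visitors <= 0 or variant_visitors <= 0:
        raise ValueError("visitor counts must be positive")
    control_rate = control_conversions / control_visitors
    variant_rate = variant_conversions / variant_visitors
    if control_rate == 0:
        # A zero baseline makes a relative lift undefined.
        raise ValueError("control conversion rate is zero; lift is undefined")
    return (variant_rate - control_rate) / control_rate


# Hypothetical counts for illustration only (not from this engagement).
lift = relative_lift(control_conversions=200, control_visitors=10_000,
                     variant_conversions=250, variant_visitors=10_000)
print(f"Relative lift: {lift:.2%}")  # prints "Relative lift: 25.00%"
```

Under this definition, a 40.85% measured lift means the variant's paid-conversion rate was roughly 1.41 times the control's rate.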

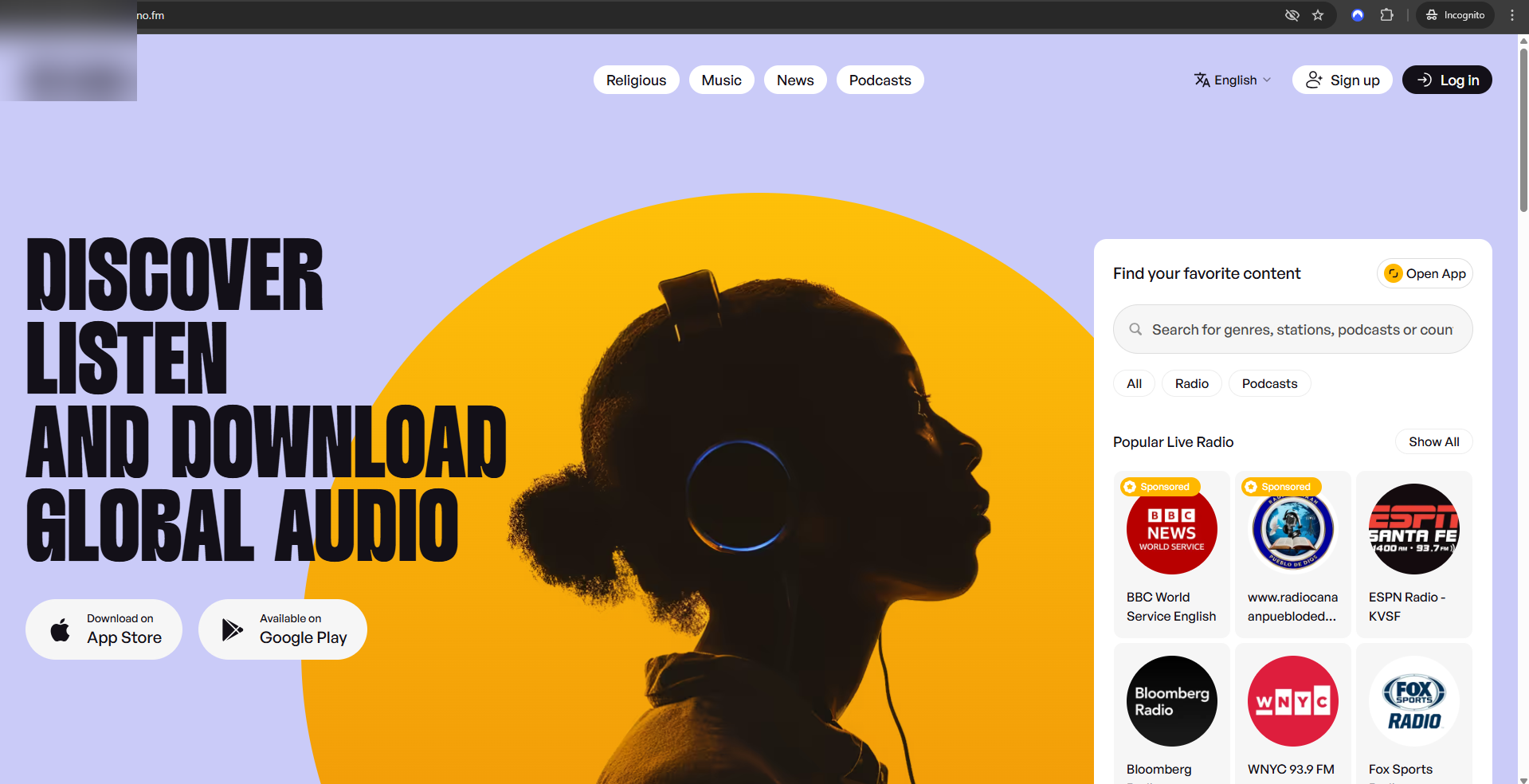

Background

The homepage led with listener-focused copy ("Discover, Listen, and Download Global Audio"), but the ideal customer profile was station creators. The value proposition for creators was absent from the most prominent real estate on the page.

Hypothesis

Shifting the homepage headline to speak directly to creators, while still allowing a listener path, would improve paid station creation from homepage visitors.

Methodology

A three-variant test ran against the listener-focused control. The variants ranged from dual-audience messaging ("Listen to global radio or create your own") to purely creator-focused messaging ("Create your radio station, reach a global audience").

Results

The dual-audience variant emerged as the clear winner with a 6.10% measured lift, while the two purely creator-focused variants lost to the control. The takeaway: creator-focused framing works best when the listener audience is still acknowledged.

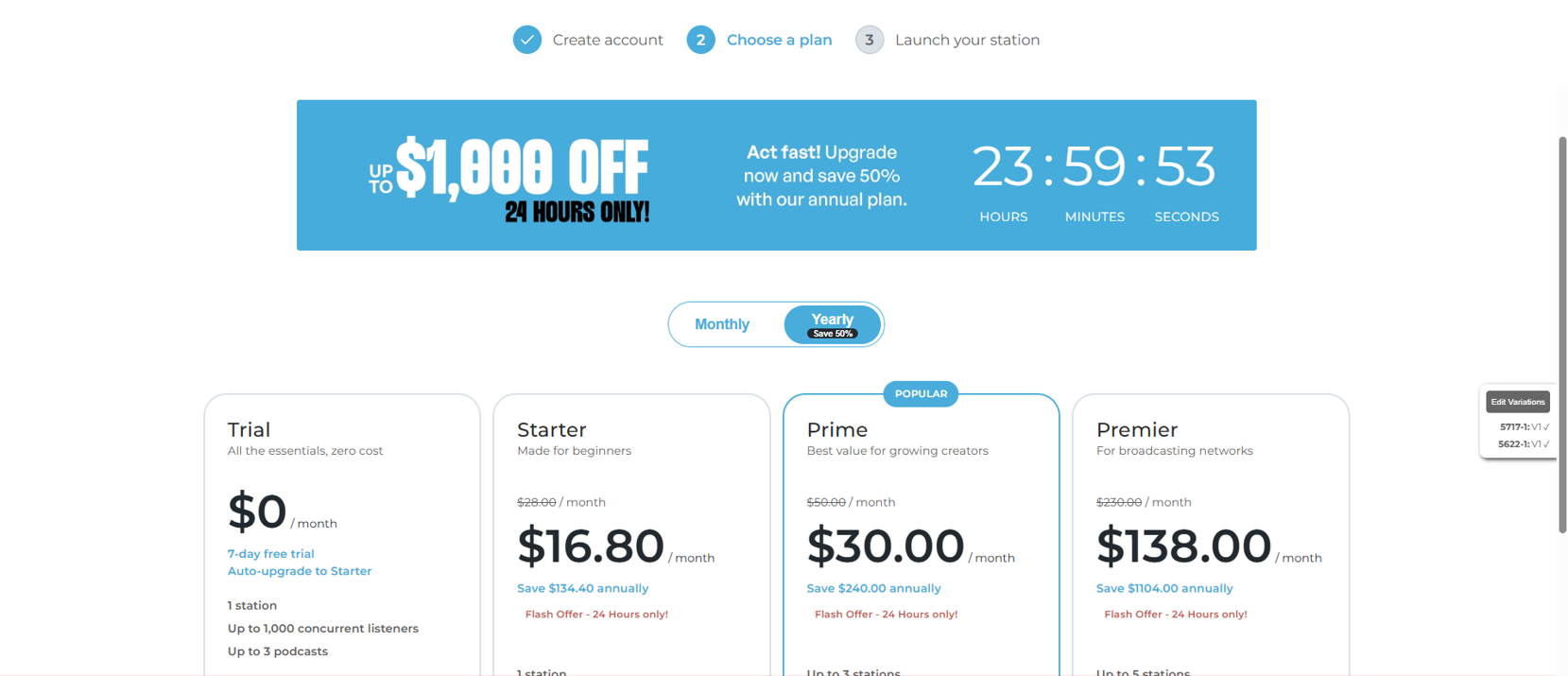

Background

The top-tier plan had meaningful features and support, but conversion to that tier was low on the plans page. Many high-intent creators were not upgrading because they had unanswered questions about fit.

Hypothesis

Offering a "white glove" consultation CTA directly on the plans page would give undecided high-intent users a clear path to human help, reducing drop-off and improving qualified pipeline.

Methodology

A "Thinking about Premier? Speak to an Expert" CTA was added to the plans page, replacing a generic promotional banner. The test measured both CTA click rate and downstream station creation.

Results

The variant was a statistically significant winner for CTA clicks and delivered a 5.64% measured lift on paid station creation. The result supported the view that high-intent visitors respond strongly to a human-touch offer at the point of decision.

Program Results

This was a 3-month CRO engagement: a comprehensive audit followed by an active A/B testing program that ran 6 tests in sequence across the highest-leverage parts of the conversion funnel.

Five of the six tests won, an 83% win rate. The program's combined annualized revenue impact was over $531,000 in best-estimate revenue and $231,000 in conservative revenue. The flagship email verification test anchored this total, and additional wins on the homepage, plans page, and signup flow compounded it.

Beyond the measured lifts, the engagement also delivered a full CRO audit with 21 prioritized recommendations, GA4 funnel tracking improvements (including paid conversion event setup across multiple properties), and a validated messaging strategy for the creator audience.

The program illustrates how a sustained testing engagement, rather than a single test, can produce meaningful SaaS revenue growth. It also shows how client-side test results translate into a clear, prioritized backlog of engineering work for native implementation.

"This engagement is a textbook example of why CRO works as a program, not a project. Six tests in three months. Five winners. The flagship email verification redesign carried most of the revenue impact, but the other four wins compounded. No single test in isolation could have produced this combined result. That's the case for sustained testing engagements over one-off projects."

Frequently Asked Questions

What is an engagement-level CRO case study?

What was tested in this audio streaming SaaS CRO engagement?

Which test had the biggest impact?

How long does a CRO testing program need to run to produce results?

What's the difference between conservative and best-estimate revenue impact?

How does ConversionTeam decide what to test?